The push to crack autonomous driving has produced a stark split in strategy. Most automakers and tech firms back sensor fusion, combining cameras, radar, and LiDAR for layered redundancy. Tesla, by contrast, has pursued a single-sensor path centered on cameras, even removing and disabling radar in its vehicles. To see why, it helps to understand what Tesla chose not to use.

What is Sensor Fusion?

Sensor fusion seeks to merge complementary inputs from different sensor types into one unified model of a vehicle’s surroundings. Each sensor has strengths and trade-offs, and fusing them aims to offset individual weaknesses.

Cameras deliver the richest, highest-resolution view, capturing color and texture, reading signs, recognizing traffic light colors, and interpreting complex visual context. However, they can be impaired by poor lighting and adverse weather, and they struggle to directly measure relative velocity.

Radar excels at measuring distance and velocity, including in rain, fog, and snow. Its drawback is low resolution: matching the resolution of a single camera in one direction would require a 12-foot by 12-foot square radar array costing millions. Radar reliably indicates that something is present and how fast it is moving, provided it is moving, but it has difficulty identifying object types and detecting stationary objects.

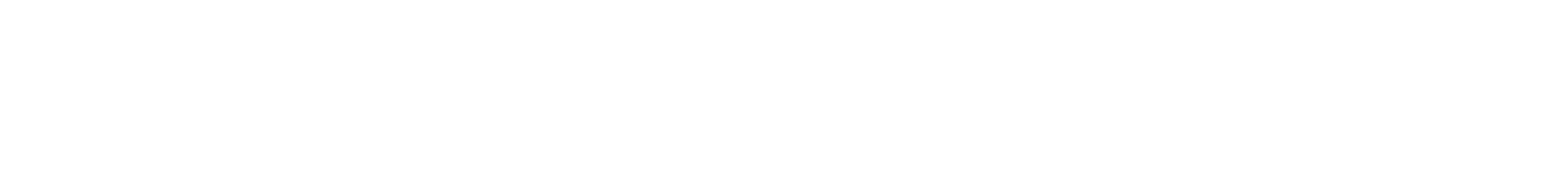

LiDAR, which uses lasers, builds a precise 3D point cloud and measures distance and shape with high accuracy, enabling detailed 3D environment models. Its main downsides are relatively high sensor cost and degraded performance in fog, snow, and rain. It also generates such large volumes of data that simply organizing the input demands immense computation.

Companies such as Waymo and Cruise adopt the established approach of fusing cameras, radar, and LiDAR to provide redundancy.
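As a rough illustration of how fusion can offset individual weaknesses, the sketch below combines several distance estimates by inverse-variance weighting, so that the less noisy sensor counts for more. This is a generic textbook technique, not a description of any company's pipeline; the function name and the noise figures are assumptions made up for the example.

```python
def fuse_inverse_variance(estimates):
    """Fuse (value, variance) pairs into one estimate.

    Each sensor's reading is weighted by 1 / variance, so a noisy
    sensor contributes less than a precise one.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight  # never larger than the smallest input variance
    return fused_value, fused_variance


# Hypothetical readings of the distance to a lead vehicle, in meters.
camera_estimate = (50.0, 4.0)  # assumed: vision depth is noisier at range
radar_estimate = (52.0, 1.0)   # assumed: radar ranging is tighter

fused_distance, fused_variance = fuse_inverse_variance([camera_estimate, radar_estimate])
print(f"fused distance: {fused_distance:.1f} m, variance: {fused_variance:.2f}")
```

The fused variance is lower than either input, which is the redundancy argument in miniature. The scheme assumes the sensors broadly agree and that their noise levels are known; it says nothing about what to do when they flatly contradict each other.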

Where Tesla Started: A Multi-Sensor Approach

Tesla did not begin with vision only. Early Autopilot systems, through 2021, paired cameras with a forward-facing radar sourced from automotive suppliers like Bosch. In that conventional fusion setup, radar was the primary source for measuring a lead vehicle’s distance and speed, enabling features such as Traffic-Aware Cruise Control and early iterations of FSD Beta.

For years, this multi-sensor design was the norm, and the expectation was that radar would remain a core safety backstop as Tesla advanced its custom FSD hardware. Then, in 2021, Tesla pivoted.

The Pivot: Why Tesla Abandoned Radar

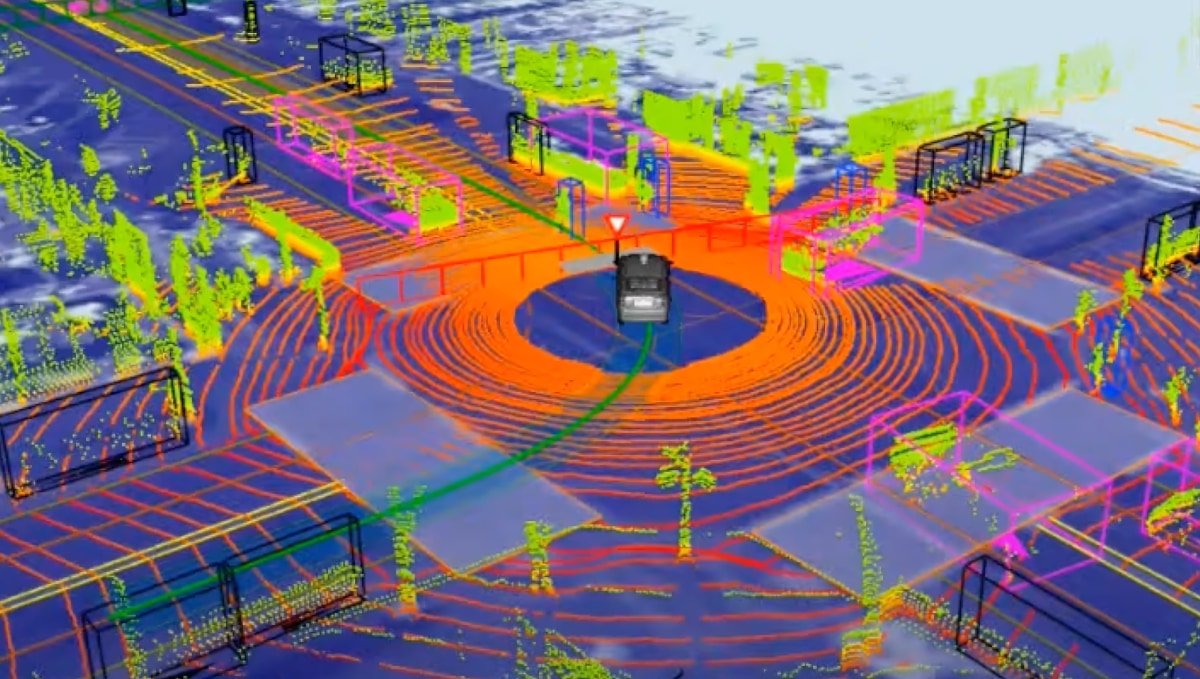

In May 2021, Tesla announced it would remove the radar from new Model 3 and Model Y vehicles and shift to a camera-only system called Tesla Vision. The move reflects Elon Musk’s first-principles argument that conflicting sensor inputs can undermine safety.

“Lidar and radar reduce safety due to sensor contention. If lidars/radars disagree with cameras, which one wins?

“This sensor ambiguity causes increased, not decreased, risk. That’s why Waymos can’t drive on highways.

“We turned off the radars in Teslas to increase safety.…”

Elon Musk (@elonmusk), August 25, 2025

In this view, sensor fusion introduces sensor contention: when inputs disagree, the system must decide which to trust. Should priority be fixed ahead of time, or chosen on the fly? That ambiguity can paralyze decision-making in safety-critical moments.
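The two options in that question can be sketched in a few lines. The example below is purely illustrative: the `Detection` type, the sensor names, and the confidence values are hypothetical and do not reflect how Tesla or anyone else arbitrates between sensors.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    sensor: str            # hypothetical sensor label, e.g. "camera" or "radar"
    obstacle_ahead: bool   # what this sensor believes
    confidence: float      # self-reported confidence in [0, 1]


def resolve_fixed_priority(detections, priority):
    """Priority fixed ahead of time: the first listed sensor that reported wins."""
    by_sensor = {d.sensor: d for d in detections}
    for name in priority:
        if name in by_sensor:
            return by_sensor[name].obstacle_ahead
    raise ValueError("no detection from any prioritized sensor")


def resolve_by_confidence(detections):
    """Priority chosen on the fly: the most confident sensor wins."""
    return max(detections, key=lambda d: d.confidence).obstacle_ahead


# A disagreement: the camera sees a clear road, the radar reports an obstacle.
readings = [
    Detection("camera", obstacle_ahead=False, confidence=0.9),
    Detection("radar", obstacle_ahead=True, confidence=0.6),
]

brake_fixed = resolve_fixed_priority(readings, priority=["radar", "camera"])
brake_dynamic = resolve_by_confidence(readings)
print(brake_fixed, brake_dynamic)
```

With the same two readings, the fixed radar-first rule brakes and the confidence rule does not. Neither answer is obviously right, which is the contention problem described above.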

Tesla’s FSD engineers have cited practical weaknesses. Tesla AI Engineer Yun-Ta Tsai noted that radar struggles to differentiate stationary objects that do not produce frequency shifts, objects with thin cross-sections, and objects with low radar reflectivity. These limitations contributed to past phantom braking incidents in which a car could misinterpret a stationary overpass or a discarded aluminum can as a stopped vehicle.
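One way to read the stationary-object limitation is through the Doppler relation f_d = 2 · v · f_c / c, where v is the closing speed along the radar beam. The sketch below assumes a 77 GHz carrier, a common automotive radar band, and uses made-up speeds; the helper names are hypothetical. Seen from a moving car, everything fixed to the road closes at the car's own speed, so a stopped vehicle and an overpass produce the same shift.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0
CARRIER_HZ = 77e9  # assumed carrier frequency; not taken from the article


def doppler_shift_hz(closing_speed_m_s, carrier_hz=CARRIER_HZ):
    """Two-way Doppler shift for a target closing at the given radial speed."""
    return 2.0 * closing_speed_m_s * carrier_hz / SPEED_OF_LIGHT_M_S


def looks_stationary(closing_speed_m_s, ego_speed_m_s, tolerance_m_s=0.5):
    """True when a return closes at roughly the ego speed, i.e. it is fixed to the road."""
    return abs(closing_speed_m_s - ego_speed_m_s) <= tolerance_m_s


ego_speed = 30.0  # hypothetical ego speed in m/s

# Hypothetical closing speeds (m/s) for three radar returns.
returns = {
    "stopped car": 30.0,
    "overpass": 30.0,
    "slower lead car": 5.0,
}

for label, closing_speed in returns.items():
    shift = doppler_shift_hz(closing_speed)
    print(f"{label}: {shift:.0f} Hz, stationary={looks_stationary(closing_speed, ego_speed)}")
```

On velocity alone, the stopped car and the overpass are indistinguishable here. Telling them apart would take angular resolution, which is the low-resolution weakness noted earlier.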

From Tesla’s perspective, the scalable route to autonomy is to master vision. The argument runs that humans drive with two eyes and a brain, in effect two cameras and a neural network, so if computer vision can be solved robustly, other sensors at best add cost and complexity and at worst introduce hazardous ambiguity.

Where We Are Today: The Vision on Vision

Today, every new Tesla relies solely on Tesla Vision and its eight cameras. A sophisticated neural network constructs a 3D vector-space representation of the environment, which the vehicle then analyzes and navigates within.

There is a notable footnote. When Tesla launched its Hardware 4 (now AI4), new Model S and Model X vehicles included a high-definition radar. Tesla has not activated these radars for use in FSD.

FSD is actually most advanced on the Model Y, Tesla’s most common vehicle, rather than on models with the extra sensor. While Tesla likely gathers some data from those radars and validates performance, they are not part of the FSD suite.

A Binary Outcome

Abandoning sensor fusion is the clearest differentiator between Tesla’s approach and the rest of the industry. It is a high-stakes, all-or-nothing bet, and so far Tesla appears to be ahead.

Elon Musk, Ashok Elluswamy, and the rest of the Tesla AI team maintain that the only path to a scalable, general-purpose autonomous system with human-like intelligence is to fully solve vision. If that bet pays off, the resulting system could be far cheaper and vastly more scalable than rivals’ expensive, sensor-heavy designs.

If the bet fails, Tesla may encounter a performance ceiling that requires those very sensors. To date, there has been no clear sign of such a ceiling. Tesla remains fully committed to a vision-only system, and its progress and capability are evident.

Share:

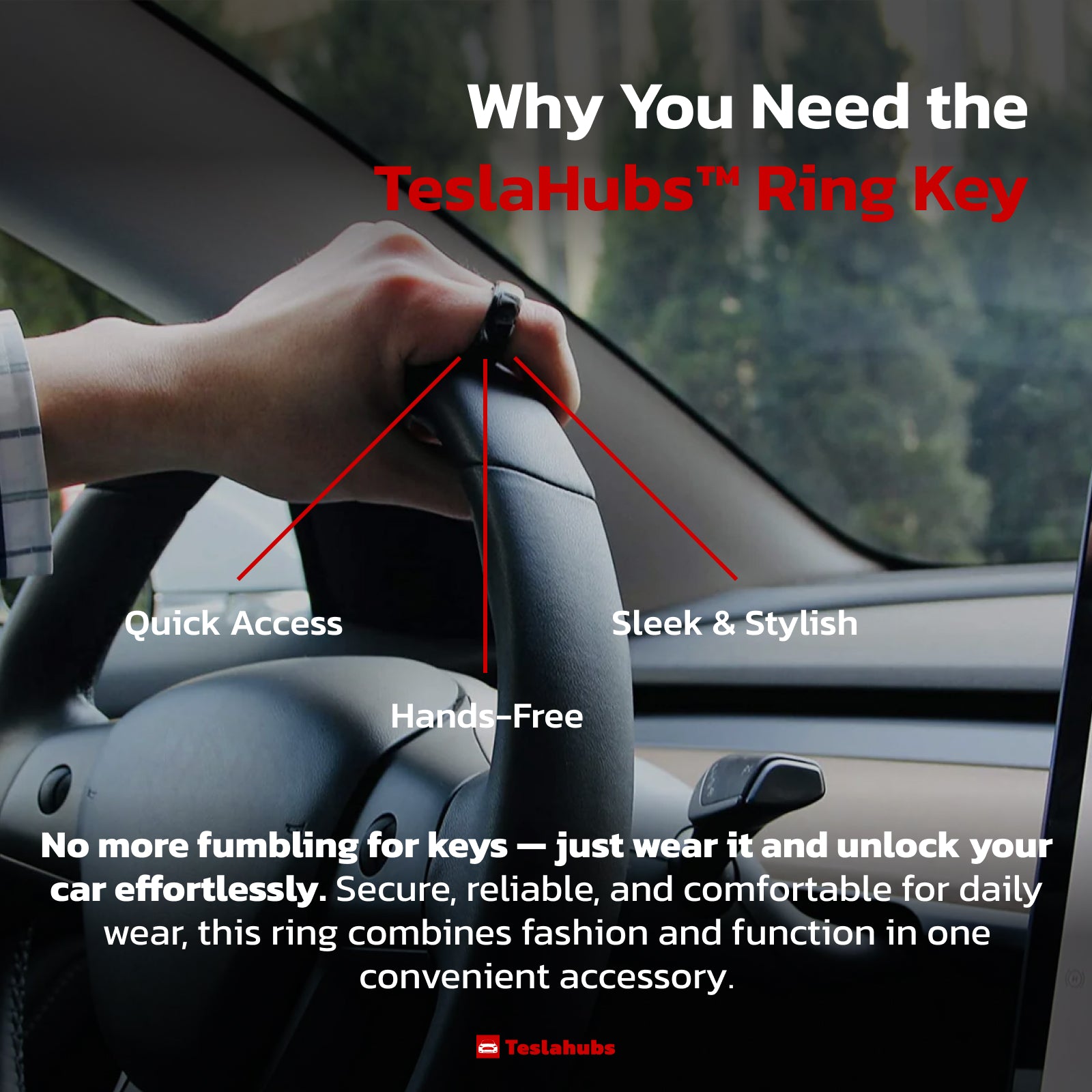

How to Use a Single Key Card to Open Multiple Teslas

Software Update 2025.45.10 — Release Notes